Robot Factory AR - Giving Middle Schoolers a Reason to Code

Robot Factory is a socially competitive mobile learning game for middle school students. Players learn and practice computer science concepts to build and program virtual robots, then compete against friends in a variety of social game scenarios. The game is available for Android, iOS, and WebGL (browser) platforms. The experience is composed of a three-part narrative: Tutorials, Coding Challenges, and Rewards.

VR Fitness Bike - Cycling Interface and Software Application

For this lab project, my team customized a cycling trainer and standard bicycle to include sensors for wheel tracking and steering, plus automated motorized tension resistance. The resistance of the bicycle trainer adapts to the terrain of the simulated environment. This environment is designed to match high-intensity interval training akin to that of spin classes.

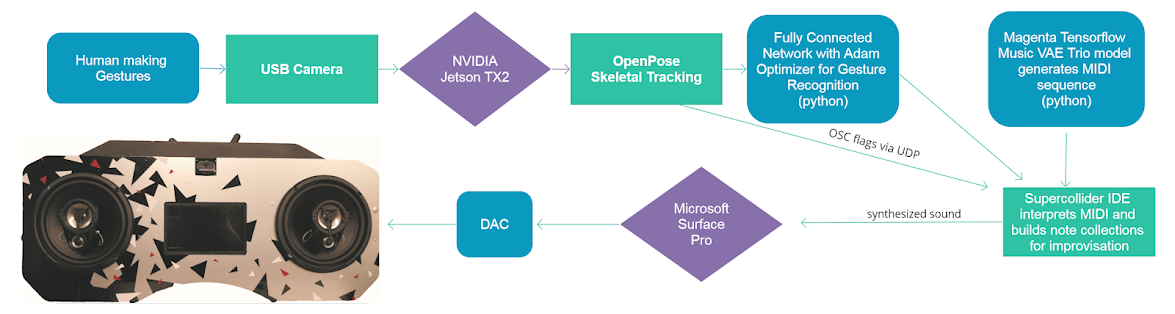

Mapping Music to Motion - A Computer Vision Music System

We created a musical interaction system that uses three machine learning models for skeletal tracking, gesture recognition, and music generation. We embodied this A.I. system in a boombox. This project took place in our Hardware Software Lab at the Global Innovation Exchange at the University of Washington.

Crypto-currency Rewards for Eco-Friendly Behavior

This case study and in-depth process report is the result of a design thinking exercise in reducing single-occupancy vehicle use in the urban Seattle area. Our solution aims to provide incentives and value to people’s commuting experience by tracking and rewarding bus riding. This approach aims to reward the various stakeholders in the city’s flow of traffic, including businesses, people, and organizations.

Vox Augmento: An Improvisable Sampler Interface

New technology is a lever for creative expression. In this award-winning weekend hackathon project, we asked how to exploit the advantages of VR and holographic interfaces musically.

Husky Dawg Daze Photo Booth

This photo booth installation uses sound and tracks hand motion to displace stochastic polygon shapes and trigger still photos. Video from the Kinect is mapped as a texture onto the shapes. New students took nearly 1,400 photos over the course of 4 hours during the event. A live feed of the photo booth was broadcast via UDP to projectors inside and outside the venue.

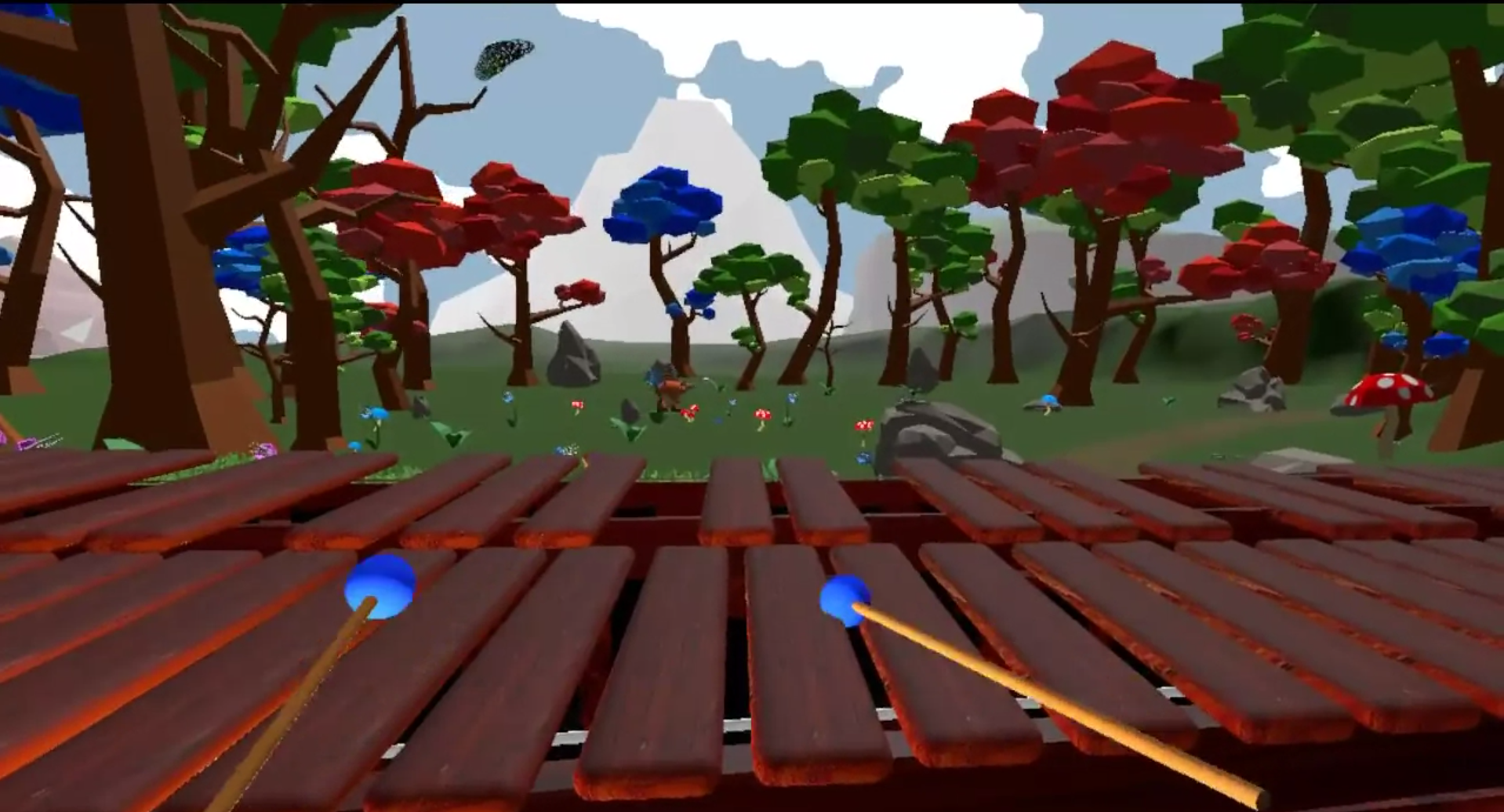

Melody: An Expressive VR Marimba (NOW on STEAM!)

For this project, I recreated the audio engine in the Pure Data patching environment. FMOD introduced too much latency, so I patched an 8-voice multi-sampler that builds inside of Unity. We were able to get some good articulation, and Evie Powell of Verge of Brilliance wrote a fun MIDI tracker. I'm not a huge fan of skeuomorphic interfaces, but a virtual version of an instrument too big to lug around is a great idea.

Optopoculus Max - Seattle VR Hackathon V

We sought to change the way people approach melodic and rhythmic riffing in music by thinking of sequences and collision-course shapes in holographic space rather than discrete events occurring linearly. Innovative human-computer design expedites the skill and dexterity that musical expression often requires. Multidimensional interfaces offer new ways to visualize sound and music, and offer feedback we've dreamed of for generations.

Winner "Best Technical Achievement" - September 2016 Seattle VR Hackathon

This project implements an ambisonics audio system to give VR a social atmosphere. VR is a very isolating experience, and we sought to make it more social by enabling surround audio via loudspeakers rather than headphones. An abstract virtual reality sandbox experience was created in Unity for triggering and spatializing unusual sound objects. You can move objects and trigger sound in 3D space. This proof of concept is a step towards experimenting with 3D musical interfaces for VR. The key technologies at work here are Unity, OSC (CNMAT package), the ICST Ambisonics package, Max, a Perception Neuron mocap suit, eight 5" 2-way monitor speakers, and a DAC featuring 10 discrete outputs. I started this project with a team of five others in September 2016 at the Seattle VR Hackathon.

heARtSpace - Microsoft HoloLens Seattle Hackathon 2016

Our team created a catheter integration and transseptal puncture simulation for the HoloLens augmented reality device. The simulation allows a user to practice the procedure with a custom 3D scan of a patient's heart. The HoloLens is a promising tool for medicine, with advancements in privacy, data, and detailed information. I designed the sound and audio user experience, integrated OSC control into Unity, designed the presentation layout, and composited the summary video for this project.

I created the foley, composed the soundtrack, and implemented the sound for this 360° video made with Unity. FMOD Studio was used for implementation, and the experience is spatialized with Microsoft's HRTF extension plug-in. This is a collaboration with Lou Ward and also works with a Perception Neuron mocap suit, placing you as the giant in real time.

Winner "Best VR Sound Experience" - May 2016 Seattle VR Hackathon

Our team created this voice-commanded, spell-casting wizard shooter for the HTC Vive with Unity 3D. Our team of six included four developers, a graphic artist, and me. I created all sound effects and composed the music during the hackathon. We incorporated Amazon Echo voice command technology, which enabled the player to cast spells by voice.

SAM Remix March 2016

foundry10 was tasked to create an interactive music exhibit for Seattle Art Museum's Remix event in March 2016. The featured exhibit was New Republic by Kehinde Wiley, which places contemporary African American subjects into classic European portraiture. I compiled beats from foundry10 students and interns with New York hip hop and 18th- and 19th-century classical music to create an interactive set of sounds. Guests could trigger and remix these styles together with no previous experience for a hands-on musical mashup.

Laptop Battle

I currently produce the Laptop Battle computer music competition in Seattle. In 2016 I designed a web app to conduct an online qualifying event. This web application uses the Meteor JS framework and allows competitors to upload videos and compete online. I designed the overall experience and a custom timeline commenting interface for the video player, to give contestants deeper, more granular feedback. http://laptopbattle.com